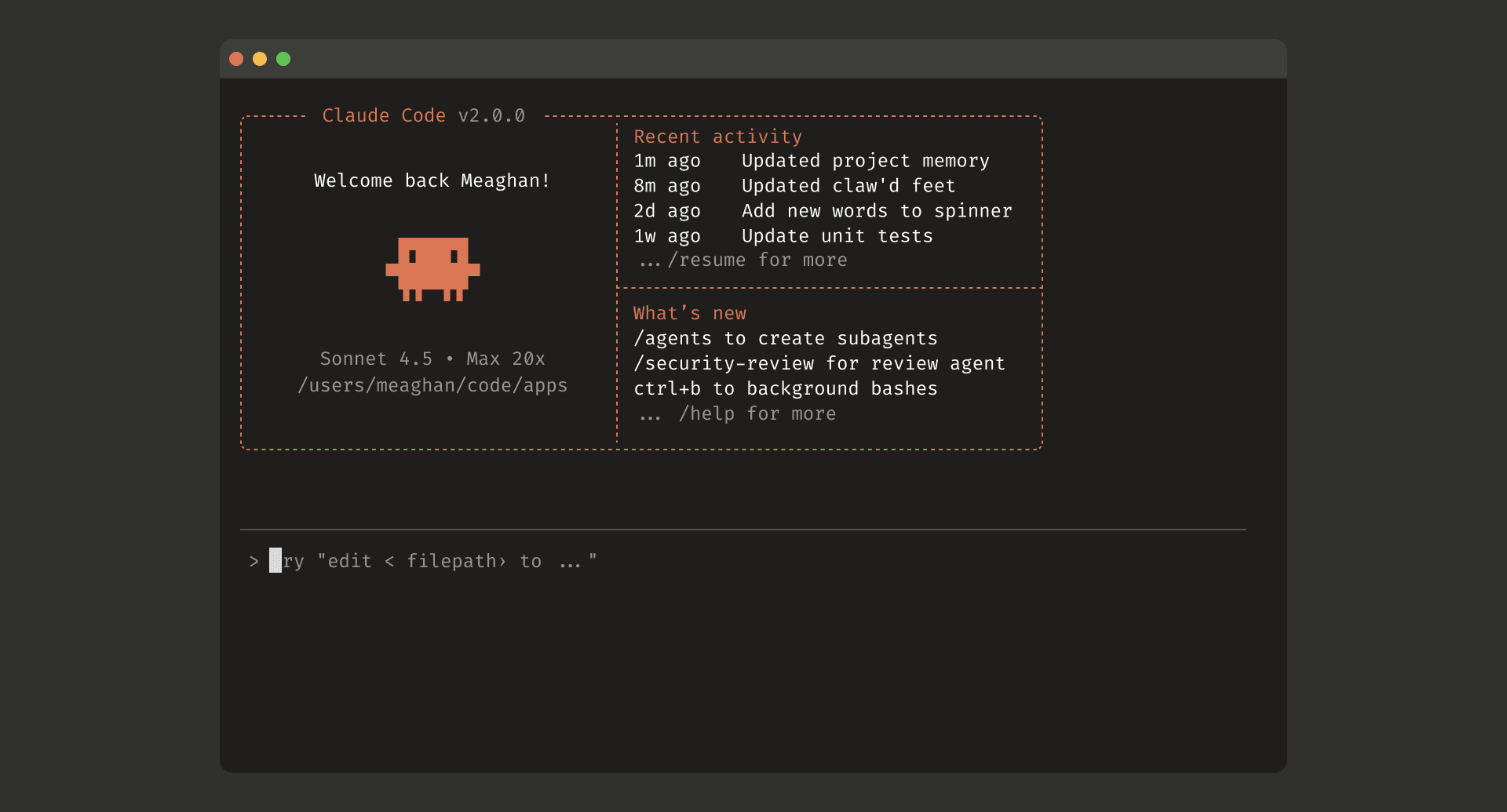

Lens correction is one of those things that sounds simple until you actually try to ship it on the web. The math exists. The database exists. But getting it into a browser bundle, loading the DB reliably, and keeping the API sane is usually where the weekend disappears.

I wanted something practical: run Lensfun in the browser, get correction maps back, and apply them in my own renderer (WebGL/WebGPU/canvas). So I packaged Lensfun into WebAssembly and wrapped it with a small, typed JS API:

- npm: `@neoanaloglabkk/lensfun-wasm`

- Lensfun compiled to WebAssembly

- ships the official Lensfun DB as an Emscripten `.data` asset (preloaded to `/lensfun-db`)

- generates correction maps for geometry/distortion, TCA, and vignetting

If you already have a remap step in your pipeline, this saves you the native glue work.

Links:

- npm: https://www.npmjs.com/package/@neoanaloglabkk/lensfun-wasm

- GitHub: https://github.com/lexluthor0304/LensfunWasm

- jsDelivr (0.1.1): https://cdn.jsdelivr.net/npm/@neoanaloglabkk/lensfun-wasm@0.1.1/

What it is (and what it isn’t)

It is

- Lens/camera search against the official Lensfun database

- A correction map generator you can feed into a remap stage:

  - geometry/distortion (`geometry`)

  - TCA / subpixel distortion (`tca`)

  - vignetting gains (`vignetting`)

It isn’t

- a full image-processing pipeline

- a “one-call” photo editor

You still decide how to sample pixels and how to blend/interpolate. The library’s job is to give you the numbers in a form that’s easy to move into a shader or a CPU remapper.
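To make "a CPU remapper" concrete, here is a minimal sketch of looking up the source coordinate for one output pixel by bilinear interpolation between grid points. The function name `sampleGeometry` is hypothetical, and the layout is an assumption rather than something the library documents here: a row-major grid of `[x, y]` pairs, with grid point `(gx, gy)` sitting at pixel `(gx * step, gy * step)` and holding the source position to sample.

```javascript
// Assumptions (not confirmed by the library docs): row-major grid, grid point
// (gx, gy) sits at pixel (gx * step, gy * step), and each entry stores the
// source (x, y) coordinate to sample for that output position.
function sampleGeometry(geometry, gridW, gridH, step, px, py) {
  // Position in grid units, clamped to the grid extent.
  const gx = Math.min(Math.max(px / step, 0), gridW - 1);
  const gy = Math.min(Math.max(py / step, 0), gridH - 1);

  // Corners of the surrounding cell (collapsed to one point on the far edges).
  const x0 = Math.floor(gx);
  const y0 = Math.floor(gy);
  const x1 = Math.min(x0 + 1, gridW - 1);
  const y1 = Math.min(y0 + 1, gridH - 1);
  const tx = gx - x0;
  const ty = gy - y0;

  // Read component c (0 = x, 1 = y) at grid point (ix, iy).
  const at = (ix, iy, c) => geometry[(iy * gridW + ix) * 2 + c];
  const lerp = (a, b, t) => a + (b - a) * t;

  const out = [0, 0];
  for (let c = 0; c < 2; c++) {
    const top = lerp(at(x0, y0, c), at(x1, y0, c), tx);
    const bottom = lerp(at(x0, y1, c), at(x1, y1, c), tx);
    out[c] = lerp(top, bottom, ty);
  }
  return out; // [sourceX, sourceY]
}
```

In a shader the same lookup usually comes for free: upload the grid as a two-channel float texture and let linear filtering do the interpolation.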

Install (bundlers)

npm install @neoanaloglabkk/lensfun-wasm

Quick start (Vite-style)

    import { createLensfun } from '@neoanaloglabkk/lensfun-wasm';
    import createLensfunCoreModule from '@neoanaloglabkk/lensfun-wasm/core';
    import wasmUrl from '@neoanaloglabkk/lensfun-wasm/core-wasm?url';
    import dataUrl from '@neoanaloglabkk/lensfun-wasm/core-data?url';

    const client = await createLensfun({
      moduleFactory: createLensfunCoreModule,
      wasmUrl,
      dataUrl,
    });

    const lenses = client.searchLenses({ lensModel: 'pEntax 50-200 ED' });
    console.log(lenses[0]);

What’s happening here:

- `core` provides the Emscripten module factory.

- `core-wasm` and `core-data` are static assets, served by your app.

- `createLensfun()` initializes the DB by default (`autoInitDb: true`).

Quick start (CDN / jsDelivr)

Pin versions in production. Example for 0.1.1:

    <script src="https://cdn.jsdelivr.net/npm/@neoanaloglabkk/lensfun-wasm@0.1.1/dist/assets/lensfun-core.js"></script>
    <script src="https://cdn.jsdelivr.net/npm/@neoanaloglabkk/lensfun-wasm@0.1.1/dist/umd/index.iife.js"></script>
    <script>
      (async () => {
        const client = await LensfunWasm.createLensfun();
        const lenses = client.searchLenses({ lensModel: 'pEntax 50-200 ED' });
        console.log(lenses[0]);
      })();
    </script>

Generate correction maps

The workflow is simple:

- Search lenses/cameras.

- Pick a match and keep its `handle`.

- Build correction maps using your image parameters.

- Apply those maps in your renderer.

Here’s a compact example:

    import {
      LF_MODIFY_TCA,
      LF_MODIFY_VIGNETTING,
      createLensfun,
    } from '@neoanaloglabkk/lensfun-wasm';

    const client = await createLensfun(/* ... */);

    const [lens] = client.searchLenses({ lensModel: 'pEntax 50-200 ED' });
    if (!lens) throw new Error('No lens match');

    const width = 4000;
    const height = 3000;
    const focal = 70;
    const crop = lens.cropFactor; // or your camera crop factor

    const mods = client.getAvailableModifications(lens.handle, crop);

    const maps = client.buildCorrectionMaps({
      lensHandle: lens.handle,
      width,
      height,
      focal,
      crop,
      // Grid spacing in pixels. Larger = smaller maps + faster build.
      // Your renderer usually interpolates between grid points.
      step: 8,
      includeTca: Boolean(mods & LF_MODIFY_TCA),
      includeVignetting: Boolean(mods & LF_MODIFY_VIGNETTING),
      aperture: 5.6, // required when includeVignetting=true
    });

Map shapes (so you know what you’re moving around):

- `geometry: Float32Array`, length = `gridW * gridH * 2` (`[x0, y0, x1, y1, ...]`)

- `tca?: Float32Array`, length = `gridW * gridH * 6` (`[rx, ry, gx, gy, bx, by, ...]`)

- `vignetting?: Float32Array`, length = `gridW * gridH * 3` (`[rGain, gGain, bGain, ...]`)
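As an illustration of how the interleaved layout is consumed, here is a rough sketch that applies the vignetting gains to a pixel buffer using the nearest grid point. The function name `applyVignettingNearest` is hypothetical, and several things are assumptions, not documented behavior: that the gains are plain multipliers on linear-light values, that the grid is row-major, and that grid point `(gx, gy)` corresponds to pixel `(gx * step, gy * step)`.

```javascript
// Assumptions (not confirmed by the library docs): gains are multipliers on
// linear-light values, the grid is row-major, and grid point (gx, gy) maps to
// pixel (gx * step, gy * step).
function applyVignettingNearest(pixels, width, height, gains, gridW, gridH, step) {
  // pixels: Float32Array of interleaved linear RGBA, modified in place.
  for (let y = 0; y < height; y++) {
    const gy = Math.min(Math.round(y / step), gridH - 1);
    for (let x = 0; x < width; x++) {
      const gx = Math.min(Math.round(x / step), gridW - 1);
      const g = (gy * gridW + gx) * 3; // start of [rGain, gGain, bGain]
      const p = (y * width + x) * 4; // start of [r, g, b, a]
      pixels[p] *= gains[g];
      pixels[p + 1] *= gains[g + 1];
      pixels[p + 2] *= gains[g + 2];
      // Alpha is left untouched.
    }
  }
  return pixels;
}
```

Nearest-point lookup keeps the sketch short; with a coarse `step` you would probably want to interpolate the gains between grid points instead, since vignetting varies smoothly across the frame.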

Practical notes

- Reuse a single `LensfunClient` if you can. DB init is the expensive part.

- `step` is your main performance dial. Start with `8` or `16` for preview, then tighten it for export.

- Call `client.dispose()` when you’re done to release native DB memory.

- License: `LGPL-3.0-or-later`. If you’re distributing this in a product, check how you want to handle compliance.
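One way to follow the "reuse a single client" advice is to wrap the initializer so it runs at most once and every caller shares the same promise. The helper below, `shareOnce`, is a hypothetical name and not part of the package; it works with any async factory.

```javascript
// Wraps an async factory so it runs at most once; all callers share one promise.
// If initialization fails, the cached promise is cleared so a later call can retry.
function shareOnce(factory) {
  let pending = null;
  return function get() {
    if (pending === null) {
      // The async wrapper calls factory() immediately and turns a synchronous
      // throw into a rejected promise.
      pending = (async () => factory())().catch((err) => {
        pending = null;
        throw err;
      });
    }
    return pending;
  };
}

// Usage sketch (createLensfun and its options come from the package):
//   const getLensfun = shareOnce(() => createLensfun({ moduleFactory, wasmUrl, dataUrl }));
//   const client = await getLensfun(); // the first call initializes the DB
//   const same = await getLensfun();   // later calls reuse the same client
```

With a shared client, `client.dispose()` belongs at application teardown instead of after each use.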

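Lens correction only becomes a hassle once you actually have to ship it on a web page: the algorithms exist and the database exists. What is missing is a packaging approach that works on the front end and an API that isn't awkward.

My goals were specific:

- Run Lensfun in the browser

- Get correction maps directly (rather than a pile of native details)

- Leave the final remap to my own rendering pipeline (WebGL/WebGPU/canvas)

So I built this package: `@neoanaloglabkk/lensfun-wasm`.

It compiles Lensfun to WebAssembly and bundles the official lens database as an Emscripten `.data` asset (preloaded to `/lensfun-db` by default). What you get on the front end is a set of maps you can use directly.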

Links:

- npm: https://www.npmjs.com/package/@neoanaloglabkk/lensfun-wasm

- GitHub: https://github.com/lexluthor0304/LensfunWasm

- jsDelivr (0.1.1): https://cdn.jsdelivr.net/npm/@neoanaloglabkk/lensfun-wasm@0.1.1/

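What it is (and what it isn’t)

It is

- Lookup and matching against the official Lensfun database (search lenses, search camera bodies)

- A correction map generator that slots into a remap stage:

  - geometry/distortion (`geometry`)

  - lateral chromatic aberration / subpixel distortion (`tca`)

  - vignetting gains (`vignetting`)

It isn’t

- a complete pixel-processing pipeline

- a finished “one-click retouch” filter

You still decide the sampling method, the interpolation strategy, and the render path. What this library does is turn Lensfun’s results into data structures that are easy to use on the front end.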

Install (bundlers)

npm install @neoanaloglabkk/lensfun-wasm

Quick start (Vite example)

    import { createLensfun } from '@neoanaloglabkk/lensfun-wasm';
    import createLensfunCoreModule from '@neoanaloglabkk/lensfun-wasm/core';
    import wasmUrl from '@neoanaloglabkk/lensfun-wasm/core-wasm?url';
    import dataUrl from '@neoanaloglabkk/lensfun-wasm/core-data?url';

    const client = await createLensfun({
      moduleFactory: createLensfunCoreModule,
      wasmUrl,
      dataUrl,
    });

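A few notes:

- `core` provides the Emscripten module factory.

- The `.wasm` and `.data` files are static assets hosted by your app.

- `createLensfun()` initializes the database by default (`autoInitDb: true`).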

CDN (jsDelivr)

Pin the version in production. The following uses 0.1.1:

    <script src="https://cdn.jsdelivr.net/npm/@neoanaloglabkk/lensfun-wasm@0.1.1/dist/assets/lensfun-core.js"></script>
    <script src="https://cdn.jsdelivr.net/npm/@neoanaloglabkk/lensfun-wasm@0.1.1/dist/umd/index.iife.js"></script>
    <script>
      (async () => {
        const client = await LensfunWasm.createLensfun();
        const lenses = client.searchLenses({ lensModel: 'pEntax 50-200 ED' });
        console.log(lenses[0]);
      })();
    </script>

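Generate correction maps (core usage)

When integrating this for real, I usually follow this order:

- Search lenses/camera bodies

- Pick a match (and keep its `handle`)

- Build the maps from your image parameters

- Do the remap in the render layer

Example: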

    import {
      LF_MODIFY_TCA,
      LF_MODIFY_VIGNETTING,
      createLensfun,
    } from '@neoanaloglabkk/lensfun-wasm';

    const client = await createLensfun(/* ... */);

    const [lens] = client.searchLenses({ lensModel: 'pEntax 50-200 ED' });
    if (!lens) throw new Error('No lens match');

    const width = 4000;
    const height = 3000;
    const focal = 70;
    const crop = lens.cropFactor;

    const mods = client.getAvailableModifications(lens.handle, crop);

    const maps = client.buildCorrectionMaps({
      lensHandle: lens.handle,
      width,
      height,
      focal,
      crop,
      // Grid step in pixels. Larger = faster build and less data;
      // the renderer usually has to interpolate between grid points.
      step: 8,
      includeTca: Boolean(mods & LF_MODIFY_TCA),
      includeVignetting: Boolean(mods & LF_MODIFY_VIGNETTING),
      aperture: 5.6, // required when includeVignetting=true
    });

Map sizes (so you can estimate transfer and texture sizes up front):

- `geometry: Float32Array`, length = `gridW * gridH * 2` (`[x0, y0, x1, y1, ...]`)

- `tca?: Float32Array`, length = `gridW * gridH * 6` (`[rx, ry, gx, gy, bx, by, ...]`)

- `vignetting?: Float32Array`, length = `gridW * gridH * 3` (`[rGain, gGain, bGain, ...]`)
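The lengths above translate directly into a memory estimate. The sketch below takes `gridW` and `gridH` as inputs, because how the library derives them from `width`, `height`, and `step` is not stated here; the function name `estimateMapBytes` is hypothetical.

```javascript
// Estimates the element count and byte size of the correction maps for a grid.
// Per grid point: geometry = 2 floats, tca = 6 floats, vignetting = 3 floats.
function estimateMapBytes(gridW, gridH, { tca = false, vignetting = false } = {}) {
  const points = gridW * gridH;
  const floatsPerPoint = 2 + (tca ? 6 : 0) + (vignetting ? 3 : 0);
  const floats = points * floatsPerPoint;
  return {
    points,
    floats,
    bytes: floats * Float32Array.BYTES_PER_ELEMENT, // 4 bytes per float32
  };
}

// Example: a 10 x 5 grid with all three maps enabled.
console.log(estimateMapBytes(10, 5, { tca: true, vignetting: true }));
// → { points: 50, floats: 550, bytes: 2200 }
```

For scale, a 4000 x 3000 image at `step: 8` should give a grid on the order of 500 x 375 points, so all three maps together would come to roughly 8 MB of float data under these assumptions.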

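Takeaways

- Reuse a single `LensfunClient` where possible; avoid initializing the database repeatedly.

- `step` is your most important performance knob: start at `8` or `16` for preview and lower it when you need finer detail.

- Remember to call `client.dispose()` when you’re done; it releases the database memory on the WASM side.

- The license is `LGPL-3.0-or-later`. If you plan commercial distribution, work out your compliance approach ahead of time.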
